- FREE WINDOWS MONITOR NETWORK FOR PACKET LOSS HOW TO

- FREE WINDOWS MONITOR NETWORK FOR PACKET LOSS DRIVER

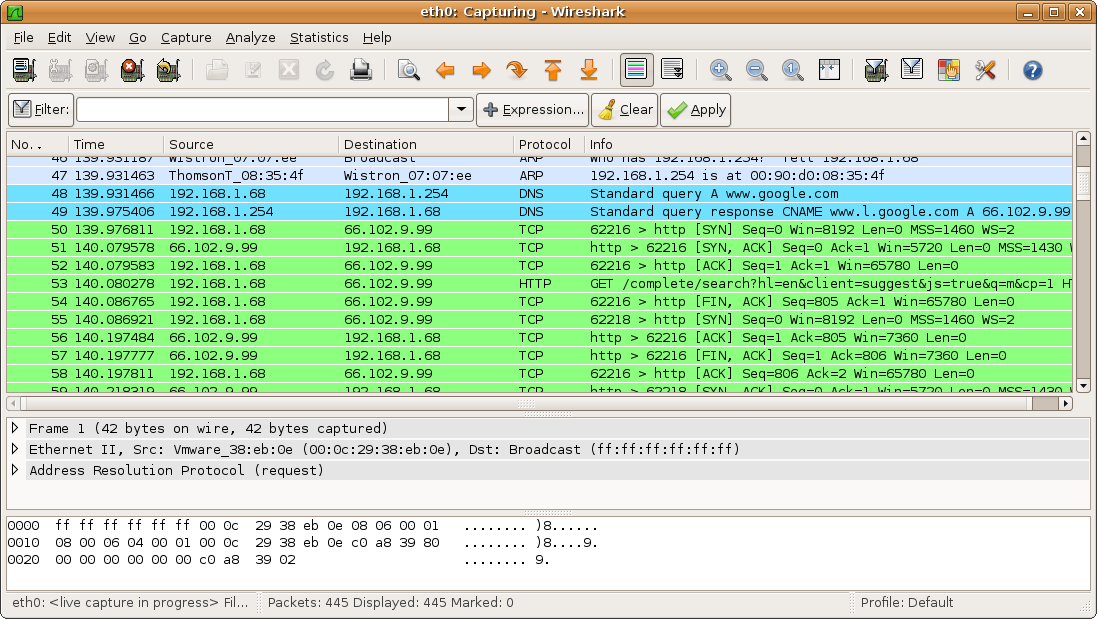

ESXi is generally very efficient when it comes to basic network I/O processing. Guests are able to make good use of the physical networking resources of the hypervisor, and it isn’t unreasonable to expect close to 10Gbps of throughput from a VM on modern hardware. Dealing with very network-heavy guests, however, does sometimes require some tweaking. I quite often get questions from customers who observe TCP retransmissions and other signs of packet loss when doing VM packet captures. The loss may not be significant enough to cause a real application problem, but it may have some performance impact during peak times and under heavy load.

After doing some searching online, customers will quite often land on VMware KB 2039495 and KB 1010071, but there isn’t a lot of context and background to go with these directions. Today I hope to take an in-depth look at VMXNET3 RX buffer exhaustion and show not only how to increase buffers, but also how to determine whether it’s even necessary.

Not unlike physical network cards and switches, virtual NICs must have buffers to temporarily store incoming network frames for processing. During periods of very heavy load, the guest may not have the cycles to handle all the incoming frames, and the buffer is used to temporarily queue them. If that buffer fills more quickly than it is emptied, the vNIC driver has no choice but to drop additional incoming frames. This is what is known as buffer or ring exhaustion.

To demonstrate ring exhaustion in my lab, I had to get a bit creative. There weren’t any easily reproducible ways to do this with 1Gbps networking, so I looked for other ways to push my test VMs as hard as I could. To do this, I simply ensured the two test VMs were sitting on the same ESXi host, in the same portgroup. This removes all physical networking and allows the guests to communicate as quickly as possible without the constraints of physical networking components.

To run this test, I used two VMs with Debian Linux 7.4 (3.2 kernel) on them. Both are very minimal deployments with only the essentials. From a virtual hardware perspective, both have a single VMXNET3 adapter, two vCPUs and 1GB of RAM.

Although VMware Tools is installed in these guests, they are using the ‘-k’ distro-bundled version of the VMXNET3 driver. To generate large amounts of TCP traffic between the two machines, I used iperf 2.0.5, a favorite that we use for network performance testing. The benefit of using iperf over other tools and methods is that it does not need to write or read anything from disk for the transfer; it simply sends and receives TCP data to and from memory as quickly as it can.

Although I could have done a bi-directional test, I decided to use one machine as the sender and the other as the receiver. This helps to ensure one side is especially RX heavy.
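To make the idea of ring exhaustion described above a bit more concrete, here is a minimal Python sketch of a fixed-size receive ring that fills faster than it drains. It is a toy model only and not how the VMXNET3 driver is implemented. The function name `simulate_rx_ring`, the tick-based timing, and the ring sizes and frame rates are all assumptions chosen for illustration; they are not driver defaults or measured values.

```python
from collections import deque


def simulate_rx_ring(ring_size, arrivals_per_tick, drained_per_tick, ticks):
    """Toy model of an RX ring: returns (delivered, dropped, still_queued).

    All parameters are hypothetical. One "tick" is an arbitrary time slice in
    which frames arrive first and the guest then drains what it has cycles for.
    """
    ring = deque()
    delivered = 0
    dropped = 0
    for _ in range(ticks):
        # Incoming frames are queued while there is room in the ring;
        # once the ring is full, additional frames are dropped.
        for _ in range(arrivals_per_tick):
            if len(ring) < ring_size:
                ring.append(object())
            else:
                dropped += 1
        # The guest empties at most drained_per_tick frames per tick.
        for _ in range(min(drained_per_tick, len(ring))):
            ring.popleft()
            delivered += 1
    return delivered, dropped, len(ring)


if __name__ == "__main__":
    # Guest keeps up with the sender: nothing is dropped.
    print(simulate_rx_ring(256, 100, 100, 50))
    # Sender outpaces the guest: the small ring fills and frames are dropped.
    print(simulate_rx_ring(256, 120, 100, 50))
    # Same overload with a larger ring: the burst is absorbed for now.
    print(simulate_rx_ring(4096, 120, 100, 50))
```

In this model a larger ring only buys time. If frames keep arriving faster than the guest can process them, the larger ring eventually fills as well, which is one reason it is worth checking whether a bigger buffer is actually needed before changing it.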